FaceNet: A Unified Embedding for Face Recognition and Clustering

Give the formula for the Triplet Loss.

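$$L = \sum_{i}^{N} \left[ \lVert f(x_i^a) - f(x_i^p) \rVert_2^2 - \lVert f(x_i^a) - f(x_i^n) \rVert_2^2 + \alpha \right]_+$$

where $f(x)$ is the embedding of input $x$ (constrained to the unit hypersphere, $\lVert f(x) \rVert_2 = 1$), $x_i^a$ is an anchor input (e.g. an image of a specific person), $x_i^p$ is a positive sample (meaning that it belongs to the same class as $x_i^a$) and $x_i^n$ is a negative sample (i.e. from a different class). $\alpha$ is a margin that is enforced between positive and negative pairs, and $[\cdot]_+ = \max(\cdot, 0)$.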
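A minimal sketch of the loss in plain Python, assuming each embedding is a list of floats. The helper names (`l2_normalize`, `squared_distance`, `triplet_loss`) are hypothetical, and `alpha=0.2` is only an illustrative default, not a prescribed value.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def l2_normalize(v: Vector) -> List[float]:
    """Project an embedding onto the unit hypersphere so that ||f(x)||_2 = 1."""
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def squared_distance(u: Vector, v: Vector) -> float:
    """Squared Euclidean distance ||u - v||_2^2."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def triplet_loss(anchors: Sequence[Vector], positives: Sequence[Vector],
                 negatives: Sequence[Vector], alpha: float = 0.2) -> float:
    """Sum over triplets of [d(a, p) - d(a, n) + alpha]_+."""
    total = 0.0
    for a, p, n in zip(anchors, positives, negatives):
        d_ap = squared_distance(a, p)  # anchor-positive distance
        d_an = squared_distance(a, n)  # anchor-negative distance
        # Hinge: only triplets violating the margin contribute to the loss.
        total += max(d_ap - d_an + alpha, 0.0)
    return total
```

A triplet whose negative is already farther than the positive by at least `alpha` contributes zero, which is why the choice of triplets matters so much for training.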

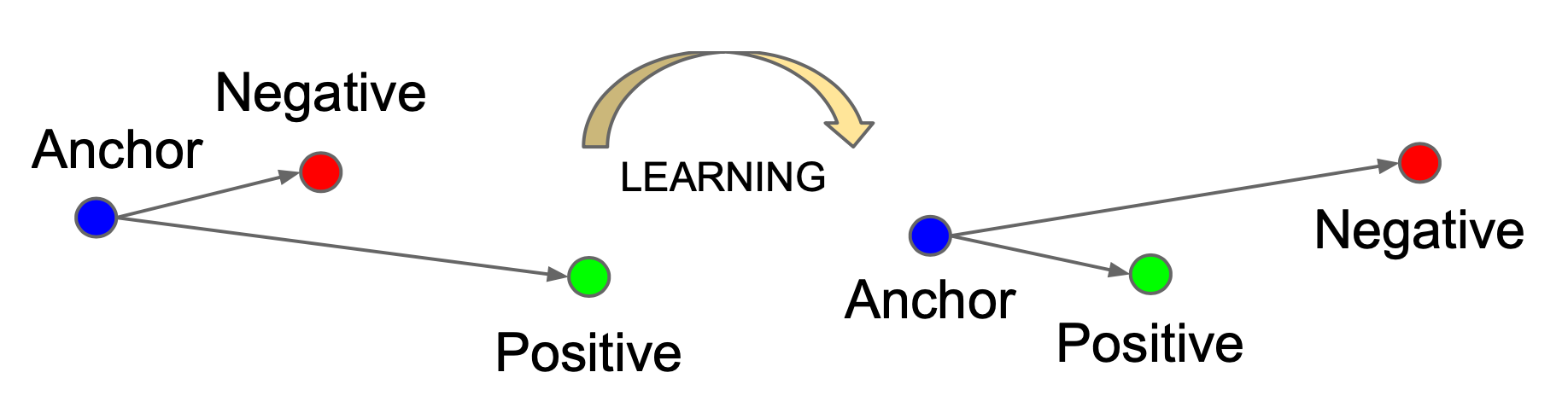

Illustration: The Triplet Loss minimizes the distance between an anchor and a positive, both of which have the same identity, and maximizes the distance between the anchor and a negative of a different identity.

Illustration: The Triplet Loss minimizes the distance between an anchor and a positive, both of which have the same identity, and maximizes the distance between the anchor and a negative of a different identity.

What is an important limitation of the triplet loss function?

It requires large batches, on the order of a few thousand examples. To form meaningful anchor-positive and anchor-negative pairs, a minimum number of samples of each identity must be present in every batch.
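One way to satisfy this constraint is to build each batch from a fixed number of identities with a fixed number of faces each. The sketch below assumes a flat list of identity labels; `sample_batch`, `identities_per_batch` and `faces_per_identity` are hypothetical names, and this sampler is an illustration rather than the exact procedure used in FaceNet.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence


def sample_batch(labels: Sequence[str], identities_per_batch: int,
                 faces_per_identity: int, seed: int = 0) -> List[int]:
    """Return dataset indices for one batch in which every chosen identity
    contributes exactly `faces_per_identity` samples."""
    rng = random.Random(seed)
    by_identity: Dict[str, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_identity[label].append(idx)
    # Only identities with enough images can supply anchor-positive pairs.
    eligible = sorted(k for k, idxs in by_identity.items()
                      if len(idxs) >= faces_per_identity)
    if len(eligible) < identities_per_batch:
        raise ValueError("not enough identities with the requested number of faces")
    batch: List[int] = []
    for identity in rng.sample(eligible, identities_per_batch):
        batch.extend(rng.sample(by_identity[identity], faces_per_identity))
    return batch
```

With K faces per identity, each identity yields K(K-1)/2 unordered same-identity pairs, so the number of usable positives grows quickly with K, at the cost of larger batches.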

In triplet loss, how are the anchor $x_i^a$, positive $x_i^p$ and negative $x_i^n$ of each triplet selected?

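Within a large batch, all anchor-positive pairs are used. For each pair, a negative is selected such that

$$\lVert f(x_i^a) - f(x_i^p) \rVert_2^2 < \lVert f(x_i^a) - f(x_i^n) \rVert_2^2$$

These negatives are called semi-hard: they are farther from the anchor than the positive, but still hard because the anchor-negative distance is close to the anchor-positive distance, i.e. they lie inside the margin $\alpha$. Selecting only the hardest negatives can lead to bad local minima early in training.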
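A minimal sketch of semi-hard mining inside one batch, assuming embeddings as lists of floats with a parallel list of identity labels. The function name `semi_hard_triplets` is hypothetical, and the rule of picking the closest negative that still satisfies the inequality is an assumption; any negative meeting the condition counts as semi-hard.

```python
import math
from itertools import permutations
from typing import List, Optional, Sequence, Tuple


def squared_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """Squared Euclidean distance ||u - v||_2^2."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def semi_hard_triplets(embeddings: Sequence[Sequence[float]],
                       labels: Sequence[str]) -> List[Tuple[int, int, int]]:
    """Return (anchor, positive, negative) index triplets for every ordered
    anchor-positive pair that has at least one semi-hard negative."""
    triplets: List[Tuple[int, int, int]] = []
    for a, p in permutations(range(len(embeddings)), 2):
        if labels[a] != labels[p]:
            continue  # not an anchor-positive pair
        d_ap = squared_distance(embeddings[a], embeddings[p])
        best_n: Optional[int] = None
        best_d = math.inf
        for k, emb in enumerate(embeddings):
            if labels[k] == labels[a]:
                continue  # negatives must come from a different identity
            d_an = squared_distance(embeddings[a], emb)
            # Semi-hard: farther than the positive; keep the closest such one.
            if d_ap < d_an < best_d:
                best_n, best_d = k, d_an
        if best_n is not None:
            triplets.append((a, p, best_n))
    return triplets
```

If the closest semi-hard negative already lies beyond the margin, the resulting triplet simply contributes zero loss; pairs with no semi-hard negative at all are skipped.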