LoRA: Low-Rank Adaptation of Large Language Models

How does LoRA work?

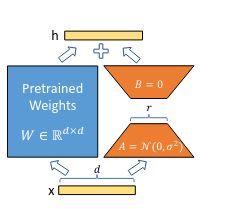

LoRA freezes the pre-trained model weights and injects trainable rank decomposition matrices into each layer of the Transformer architecture.
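As a worked form of the sentence above, the modified forward pass of a single adapted weight matrix can be written as follows. The symbols are those of the LoRA paper: $W_0$ is the frozen pre-trained weight, $B$ and $A$ are the trainable low-rank factors, and $r$ is the rank.

```latex
h = W_0 x + \Delta W x = W_0 x + B A x,
\qquad W_0 \in \mathbb{R}^{d \times k},\;
B \in \mathbb{R}^{d \times r},\;
A \in \mathbb{R}^{r \times k},\;
r \ll \min(d, k)
```

Only $A$ and $B$ receive gradient updates, so the number of trainable parameters per adapted matrix is $r(d + k)$ rather than $dk$.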

The authors use a random Gaussian initialization for A and zero for B, so the update ΔW = BA is zero at the beginning of training.
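A minimal NumPy sketch of this initialization, not the authors' implementation. The sizes `d`, `k`, `r`, the standard deviation `0.01`, and the helper name `lora_forward` are assumptions chosen for illustration, and the scaling factor the paper applies to the update is omitted for brevity.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: W0 is d x k, and r is the LoRA rank (r << min(d, k)).
d, k, r = 8, 6, 2

W0 = rng.normal(size=(d, k))              # frozen pre-trained weight (never updated)
A = rng.normal(scale=0.01, size=(r, k))   # trainable, random Gaussian init (std is an assumption)
B = np.zeros((d, r))                      # trainable, zero init

def lora_forward(x, W0, A, B):
    """Compute h = W0 x + B A x without materializing the d x k update."""
    return W0 @ x + B @ (A @ x)

x = rng.normal(size=(k,))
delta_W = B @ A                           # the low-rank update, shape (d, k)
h = lora_forward(x, W0, A, B)

# Because B starts at zero, the adapted layer initially matches the frozen one.
starts_as_pretrained = np.allclose(h, W0 @ x)
trainable_params = A.size + B.size        # r * (d + k)
```

Because `B` is zero, `delta_W` is the zero matrix and the adapted model reproduces the pre-trained model's output at step zero; training then moves `A` and `B` away from this starting point while `W0` stays fixed.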