Slimmable Neural Networks

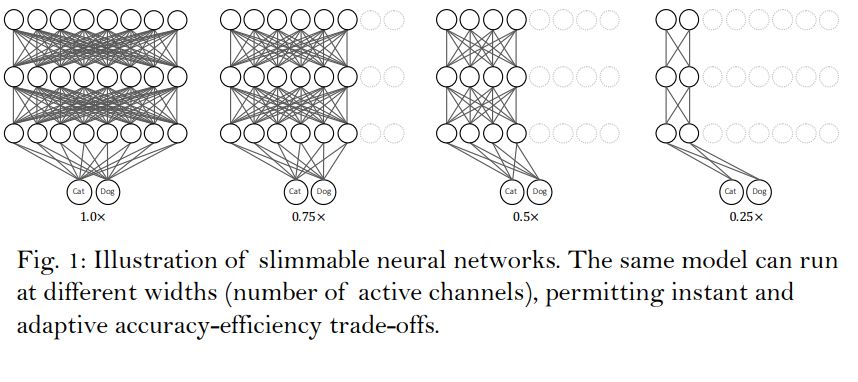

What are Slimmable Neural Networks?

A Slimmable Neural Network is a single neural network executable at different widths (number of active channels).

This permits instant and adaptive accuracy-efficiency trade-offs at runtime.

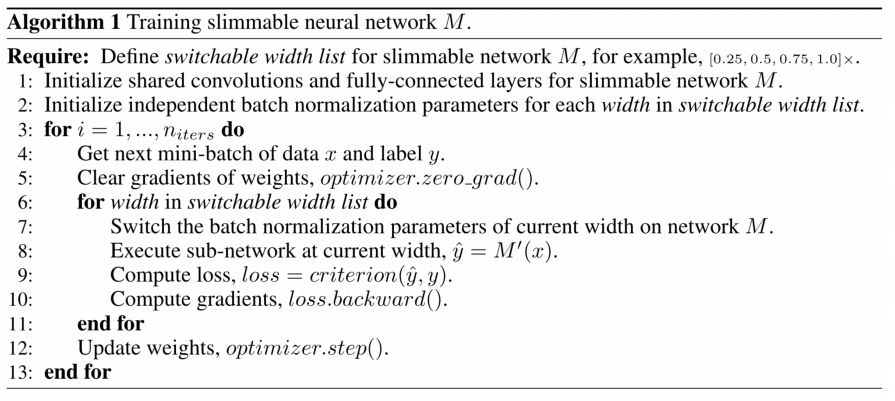

What is the training algorithm for Slimmable Neural Networks?

For each mini-batch, run every slimmed sub-network (switch) on the batch and accumulate the loss. The sub-networks share the weights of each layer, but each one has its own batch normalization layer. Perform a single weight update once all switches have been processed.
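The loop described above can be sketched on a toy model. This is a minimal illustration under stated assumptions, not the authors' implementation: the "network" is a single linear layer with squared-error loss, a width keeps only the first k shared weights, batch normalization is left out for brevity, and the names `WIDTHS`, `active_channels`, `slim_forward` and `train_step` are hypothetical.

```python
# Example switch list given as width multipliers (assumed values for illustration).
WIDTHS = [0.25, 0.5, 0.75, 1.0]


def active_channels(width, n_channels):
    """Number of leading channels kept at a given width multiplier."""
    return max(1, int(round(width * n_channels)))


def slim_forward(w, x, k):
    """Prediction of the slimmed sub-network: only the first k shared weights are used."""
    return sum(w[j] * x[j] for j in range(k))


def train_step(w, batch, lr=0.05, widths=WIDTHS):
    """One training step: accumulate over all widths, then update the weights once."""
    n = len(w)
    grad = [0.0] * n          # gradient accumulated across all switches
    total_loss = 0.0          # loss accumulated across all switches
    for width in widths:
        k = active_channels(width, n)
        for x, y in batch:
            err = slim_forward(w, x, k) - y
            total_loss += err * err / len(batch)
            # Only the first k weights take part at this width.
            for j in range(k):
                grad[j] += 2.0 * err * x[j] / len(batch)
    # Single weight update after every switch has seen the batch.
    for j in range(n):
        w[j] -= lr * grad[j]
    return total_loss
```

Because the weights are shared, the leading channels receive gradient from every width, while the trailing channels are trained only by the wider sub-networks.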

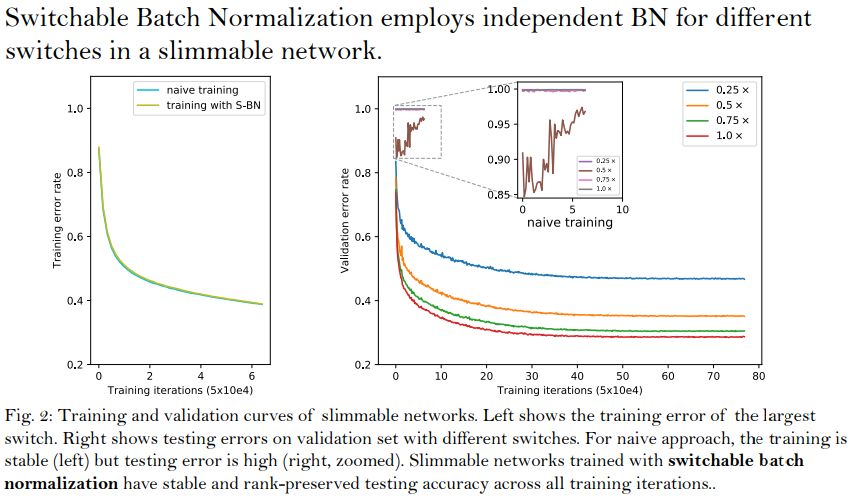

What was the main difficulty in making Slimmable Neural Networks work, and how was it solved?

When all widths share a single batch normalization layer, each switch (set of active channels) produces a different mean and variance for the aggregated feature in each layer, and these conflicting statistics are all folded into the same moving averages. The inconsistency yields inaccurate batch normalization statistics, and the error compounds as it propagates layer by layer.

Note that these batch normalization statistics (the moving averages of the means and variances) are used only during testing; during training, the mean and variance of the current mini-batch are used.
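A small numeric sketch may help show why different widths disagree on these statistics. It assumes a feature that is a weighted sum over the first k input channels; the helper names are hypothetical and the numbers are illustrative only.

```python
def aggregated_feature(x, w, k):
    """Feature computed from the first k input channels only."""
    return sum(w[j] * x[j] for j in range(k))


def mean_var(values):
    """Mean and (biased) variance of a list of numbers."""
    m = sum(values) / len(values)
    v = sum((a - m) ** 2 for a in values) / len(values)
    return m, v


def stats_per_width(batch, w, channel_counts):
    """Batch mean and variance of the aggregated feature for each channel count."""
    return {
        k: mean_var([aggregated_feature(x, w, k) for x in batch])
        for k in channel_counts
    }


batch = [[1.0, 1.0, 1.0, 1.0], [3.0, 3.0, 3.0, 3.0]]
weights = [1.0, 1.0, 1.0, 1.0]
print(stats_per_width(batch, weights, [2, 4]))
# → {2: (4.0, 4.0), 4: (8.0, 16.0)}
```

With two active channels the feature has mean 4 and variance 4; with four it has mean 8 and variance 16. A single moving average fed by both widths would match neither.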

The solution was the introduction of Switchable Batch Normalization (S-BN), which employs an independent batch normalization layer for each switch in a slimmable network.
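One way to picture S-BN is as a lookup from switch to its own batch normalization state. The following is a rough sketch under stated assumptions, not the paper's code: the class name `SwitchableBatchNorm`, the `momentum` and `eps` defaults, and the use of biased variance for the running estimate are all choices made here for illustration.

```python
import math


class SwitchableBatchNorm:
    """Keeps separate scale, shift and running statistics for every width."""

    def __init__(self, n_channels, widths, momentum=0.1, eps=1e-5):
        self.momentum = momentum
        self.eps = eps
        # Number of active channels per width multiplier.
        self.k = {w: max(1, int(round(w * n_channels))) for w in widths}
        self.running_mean = {w: [0.0] * k for w, k in self.k.items()}
        self.running_var = {w: [1.0] * k for w, k in self.k.items()}
        self.gamma = {w: [1.0] * k for w, k in self.k.items()}
        self.beta = {w: [0.0] * k for w, k in self.k.items()}

    def __call__(self, batch, width, training):
        """batch: list of samples, each holding the active channels for this width."""
        k = self.k[width]
        m = len(batch)
        if training:
            # Training uses the statistics of the current mini-batch ...
            mean = [sum(s[j] for s in batch) / m for j in range(k)]
            var = [sum((s[j] - mean[j]) ** 2 for s in batch) / m for j in range(k)]
            # ... and updates the moving averages of this switch only.
            rm, rv = self.running_mean[width], self.running_var[width]
            for j in range(k):
                rm[j] = (1 - self.momentum) * rm[j] + self.momentum * mean[j]
                rv[j] = (1 - self.momentum) * rv[j] + self.momentum * var[j]
        else:
            # Testing uses the moving averages stored for this switch.
            mean, var = self.running_mean[width], self.running_var[width]
        g, b = self.gamma[width], self.beta[width]
        return [
            [g[j] * (s[j] - mean[j]) / math.sqrt(var[j] + self.eps) + b[j] for j in range(k)]
            for s in batch
        ]
```

A call such as `bn(batch, 0.5, training=True)` touches only the statistics stored for width 0.5, so the moving averages of the other widths are never mixed in. The extra cost is small, since only the per-channel normalization parameters are duplicated while all other weights remain shared.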