High-Resolution Image Synthesis with Latent Diffusion Models

Give an overview of the Latent Diffusion Model (Stable Diffusion architecture).

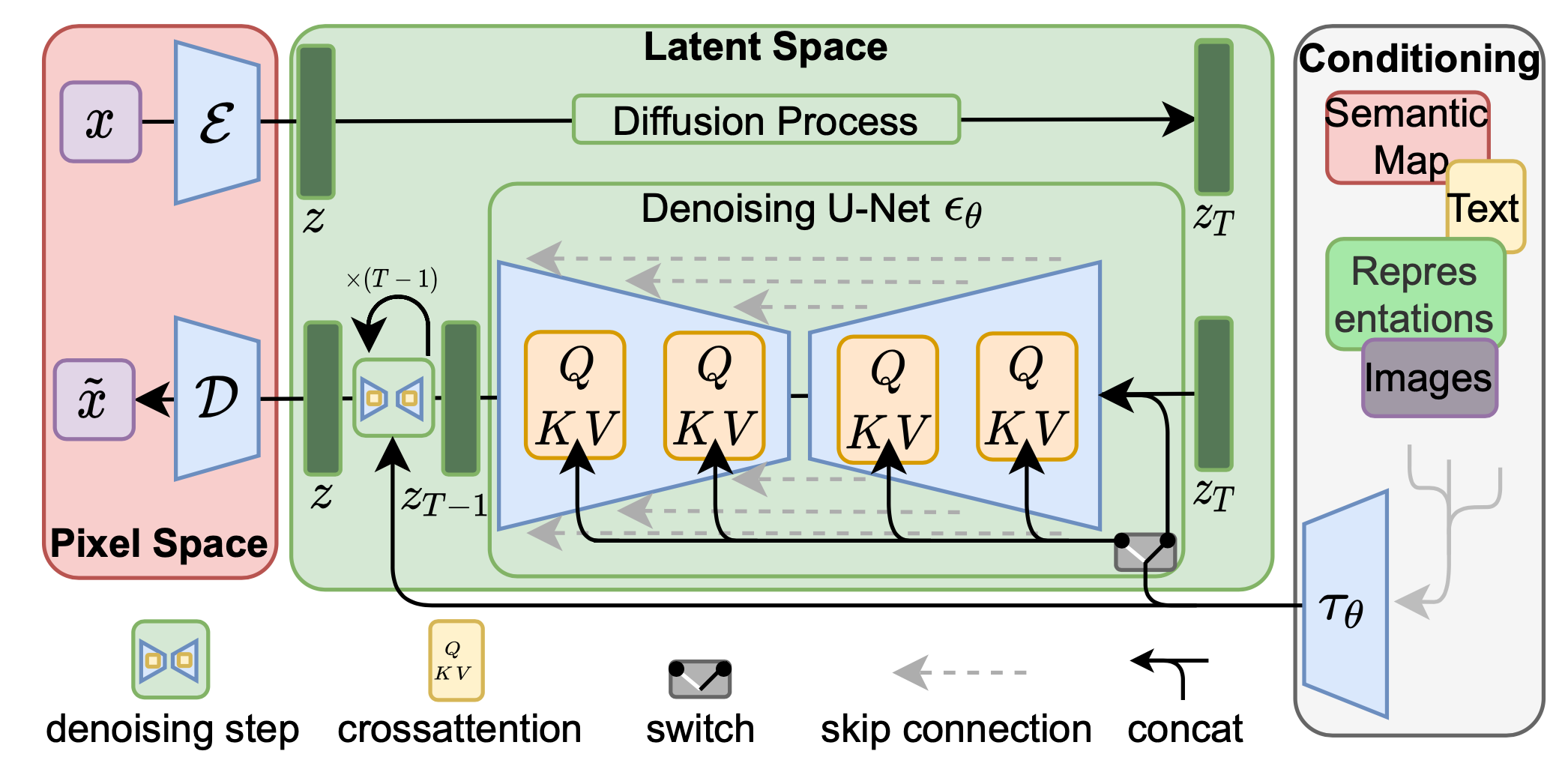

An encoder is used to compress the input image to a smaller 2D latent vector , where the downsampling rate . A decoder reconstructs the images from the latent vector: .

The diffusion and denoising processes happen on the latent vector .

The denoising model is a time-conditioned U-Net, augmented with the cross-attention mechanism to handle flexible conditioning information for image generation.

To process from various modalities, a domain specific encoder that projects to an intermediate representation is used.

An encoder is used to compress the input image to a smaller 2D latent vector , where the downsampling rate . A decoder reconstructs the images from the latent vector: .

The diffusion and denoising processes happen on the latent vector .

The denoising model is a time-conditioned U-Net, augmented with the cross-attention mechanism to handle flexible conditioning information for image generation.

To process from various modalities, a domain specific encoder that projects to an intermediate representation is used.

The cross-attention mechanism is defined as:

where the projections are:

with dimensions:

where denotes a (flattened) intermediate representation of the UNet implementation .

How is the latent diffusion model trained?

The compression part (e.g. encoder and decoder ) and diffusion part are trained in different phases (first compression then diffusion).

Usually the compression part is taken from a pretrained network such as CLIP.

How does the latent diffusion model avoid arbitratily high-variance latent spaces?

It proposes two variants to deal with this:

- KL-reg: a small Kullback-Leibler penalty is imposed towards a standard normal distribution over the learned latent, similar to a VAE.

- VQ-reg: a vector quantization layer is used within the decoder, similar to VQVAE but the quantization layer is absorbed by the decoder.